The 2026 Apple Intelligence and Siri overhaul is coming, and the Playlist Playground in iOS 26.4 shows that Apple will continue to treat AI as a background tool, not a flagship.

If you’ve been paying attention, you’ll know that Apple’s AI strategy has always been about keeping it in the background. It augments human users rather than replacing them or taking from them.

System-wide access to controls through app intents and a more personalized Siri may or may not be game-changing, depending on the user’s workflow. Apple doesn’t treat AI as some world-changing paradigm that must take over every part of the product.

The key differentiator remains: even as AI expands within Apple’s ecosystem, users will be able to ignore it if they wish.

On Monday, iOS 26.4 showed further evidence of this approach with a new AI feature called Playlist Playground. It is a new tool that will allow users to create playlists in Apple Music.

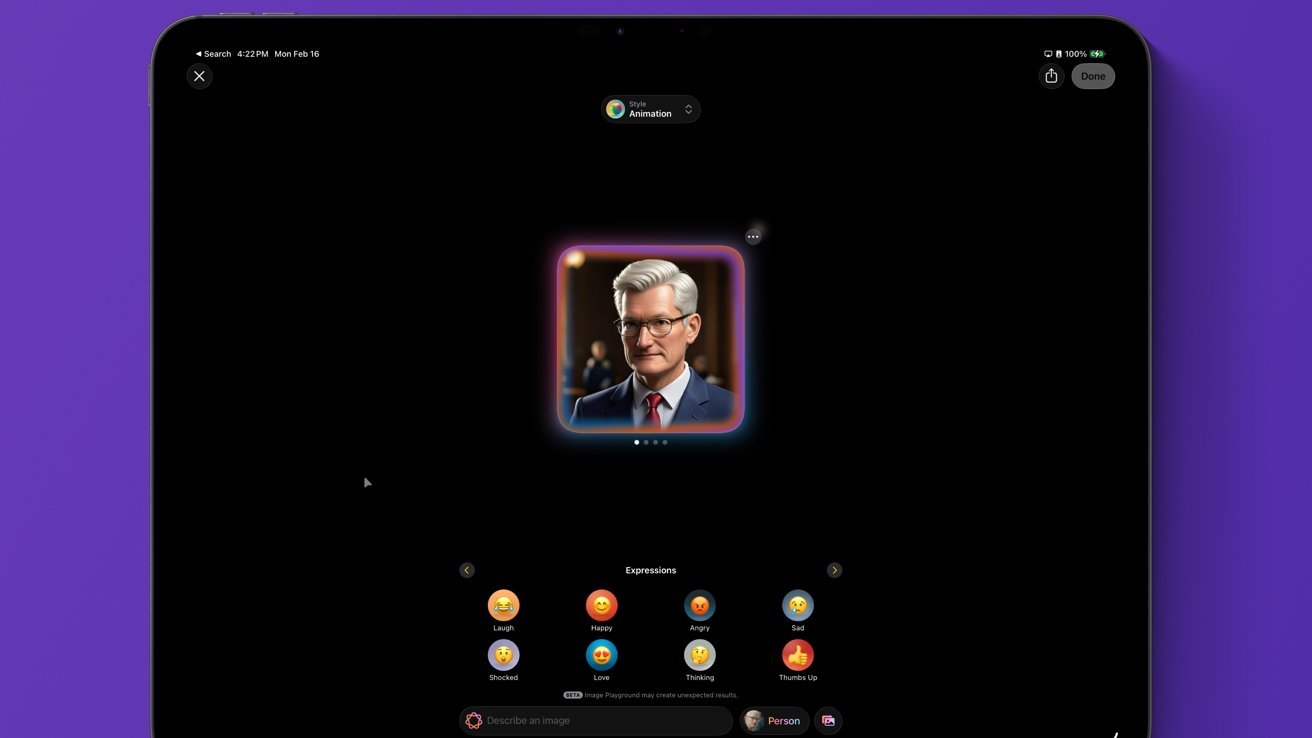

Until now, Apple’s approach to generative AI has confined it to specific feature sets tied to broader creative tools. Image Playground is part of writing notes or presentations, while Writing Tools is part of creating documents.

Even with the advent of AI, Siri’s relationship with the user has not changed

So far, Apple hasn’t shipped a feature whose primary, out-of-the-box purpose is to “create art from nothing.” Even Logic’s AI tools and Xcode’s AI connections are meant to be additive.

The virtually useless output from Image Playground makes the whole tool look more like a toy than a serious interface. Xcode itself doesn’t have tools for generative code, but Apple has given users the ability to bring in external models that can create code from scratch.

If users choose to use Xcode this way, that option is available. However, this pure form of generating code from text prompts is not an Apple Intelligence tool, and that’s a distinction worth making.

Apple doesn’t block these uses, but it does artificially limit them with disproportionately low usage caps. Server computing time costs money.

Users can manipulate Apple’s AI tools to be completely generative, but in this case they are not efficient and are often subpar compared to competitors in the space. You can create something completely new, but there are better tools for that particular use case outside of Apple’s ecosystem.

Playlist Playground is another example of this approach. It doesn’t generate music, and it doesn’t remove the need for human curation.

It’s just a new tool to extend an existing feature set.

Apple Intelligence is a hardware and software solution related to Apple’s chip manufacturing capabilities

We’re sure to see more examples of how Apple thinks AI should look and work in the coming months. As I’ve said many times before, I expect Apple’s approach to AI to be unique and to cut through all the hype, noise and complete lack of profitability surrounding the technology.

Generating playlists is an obvious and straightforward implementation. Users may not even realize they are using AI when the tool is at work, especially if they interact with it through Siri.

There’s a solid throughline from Beats Music’s machine learning-based “The Sentence” to this LLM-based Playlist Playground. In another timeline, Apple wouldn’t have labeled this feature as AI at all; it would have been just another feature.

If users want to go beyond Apple’s AI implementation, they can. As with Xcode and Image Playground allowing the use of external models, Apple will give users this option.

However, this basic level of Apple Intelligence seems to rely on the idea of augmenting humans in an ethical way. Maybe it’s debatable, but I expect it to appear as a throughline in Apple’s upcoming AI release.

We’ve heard Apple executives say this before, and examples like the ones above reinforce the concept. Apple wants AI to be a tool that works in the background, one that users don’t even realize is involved when a task gets done.

This, combined with Apple’s privacy, security and ethical execution of AI, is sure to be a winning formula in the long run. Whatever the case, users don’t really seem to care, and Apple keeps breaking financial records without AI.

Apple Intelligence Relaunch Timing

Apple Music’s new playlist generation feature may be a glimpse into Apple’s upcoming Apple Intelligence pipeline, but more is expected to follow. While tech fans are bracing themselves for a possible delay, Apple has yet to confirm anything.

Apple Intelligence will relaunch with more powerful Apple Foundation models

Previous reports suggested that iOS 26.4 would act as a starting point for Apple’s new AI initiatives. However, that was attached to a spring release window, while iOS 26.4 appears to be targeting a March release.

Apple’s seasonal launch windows are a bit odd, but “spring” tends to be late March or April. This timeframe would correspond to iOS 26.5, where Apple’s new AI features could finally be revealed.

However, Apple also revealed a March 4 media event that is most likely for the iPhone 17e. It could also serve as a soft launch point for the new Apple Foundation Models that were trained by Google Gemini.

This speculation, combined with this AI-related Apple Music feature, suggests that we are not far from seeing the redesigned Siri and Apple Intelligence. Whether they arrive in a late beta version of iOS 26.4 or as part of iOS 26.5 remains to be seen.

Be that as it may, there is still no external evidence of a major delay in Apple’s AI efforts. Things may be moving slower than some would like, but “arriving in 2026” is a fairly large window that Apple seems ready to meet.