A team of Apple researchers set out to understand what real users expect from AI agents and how they would prefer to interact with them. Here’s what they found.

Apple explores UX trends for the era of AI agents

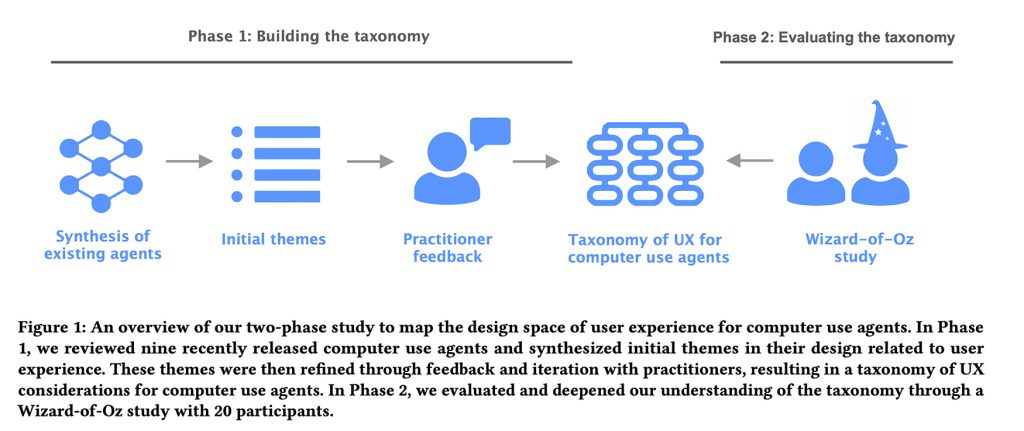

In a study titled Mapping the Design Space of User Experience for Computer Use Agents, a team of four Apple researchers report that while the market has invested heavily in the development and evaluation of AI agents, some aspects of the user experience have been overlooked: how users might want to interact with them and what those interfaces should look like.

To explore this, they divided the study into two phases. First, they identified the main UX patterns and design considerations that AI labs have built into existing AI agents. They then tested and refined these ideas through hands-on user studies, using an interesting method known as the Wizard of Oz technique.

By observing how these design patterns hold up in real-world user interactions, they were able to identify which current AI agent designs meet user expectations and which fall short.

Phase 1: Taxonomy

The researchers examined nine agents spanning desktop, mobile, and web:

- Claude Computer Use Tool

- Adept

- OpenAI Operator

- Alice

- Magentic-UI

- UI-TARS

- Project Mariner

- TaxyAI

- AutoGLM

They then consulted with “8 experts who are designers, engineers, or researchers working in the UX or AI domains at a large technology company,” which helped them build a comprehensive taxonomy of four categories, 21 subcategories, and 55 example features covering the key UX aspects of computer use agents.

The four main categories are:

- User query: how users enter commands

- Explainability of the agent’s actions: what information to present to the user about the agent’s actions

- User control: how users can intervene

- Mental model and expectations: how to help users understand the agent’s capabilities

Essentially, this framework covered everything from the interface aspects that allow agents to present their plans to users, to how they communicate their capabilities, detect errors, and allow users to intervene when something goes wrong.

With all that in hand, they moved on to Phase 2.

Phase 2: The Wizard of Oz Study

The researchers recruited 20 participants with prior experience using AI agents and asked them to interact with an AI agent through a chat interface to complete either a vacation rental task or an online shopping task.

From the study:

Participants were provided with a mock user chat interface through which they could interact with an “agent” played by the researcher. Meanwhile, the participant was also presented with an agent execution interface where the researcher acted as an agent and interacted with UI on the screen based on the participant’s command. In the chat user interface, participants could enter textual questions in natural language, which then appeared in the chat thread. Then the “agent” began execution where the researcher controlled the mouse and keyboard at their end to simulate the agent’s actions on the web page. When the researcher completed the task, he entered a hotkey that posted a “task complete” message to the chat thread. During execution, participants could use the interrupt button to stop the agent and an “agent interrupted” message appeared in the chat.

In other words, unbeknownst to participants, the AI agent was actually a researcher sitting in the next room who read their text instructions and carried out the requested task.

For each task (vacation rental or online shopping), participants were asked to complete six subtasks with the agent’s help. On some of them, the agent deliberately failed (for example, by getting stuck in a navigation loop) or deliberately made mistakes (for example, by selecting something other than what the user asked for).

At the end of each session, the researchers asked participants to reflect on their experience and suggest features or changes to improve the interaction.

They also analyzed video footage and chat logs from each session to identify recurring themes in user behavior, expectations, and agent interaction pain points.

Main findings

When all was said and done, the researchers found that users wanted visibility into what the AI agents were doing but did not want to micromanage every step; otherwise, they might as well perform the tasks themselves.

They also concluded that users want different agent behaviors depending on whether they are exploring options or performing a familiar task. Likewise, user expectations change depending on how familiar they are with the interface: the less familiar it was, the more they wanted transparency, intermediate steps, explanations, and confirmation pauses (even in low-risk scenarios).

People also want more control when actions have real consequences (such as making purchases, changing account or payment information, or contacting other people on their behalf). And trust quickly breaks down when agents make silent assumptions or mistakes.

For example, when the agent encountered ambiguous choices on a page or deviated from the original plan without clearly flagging it, participants wanted the system to pause and ask for clarification rather than pick something seemingly at random and move on.

In the same vein, participants reported discomfort when the agent was not transparent about a particular choice, especially if that choice could lead to the wrong product being selected.

All in all, this is an interesting study for app developers looking to implement agent features in their apps, and you can read it in full here.
