After several years of rumors about the feature, Apple added live translated subtitles to FaceTime in iOS 26, allowing one-on-one calls to display real-time subtitles in different languages. Here’s where to find FaceTime Live Translation and how it came about.

Live translation in FaceTime is big news, but many users don’t even know it’s there. That’s because Apple doesn’t provide a clearly labeled, easy-to-find translation option.

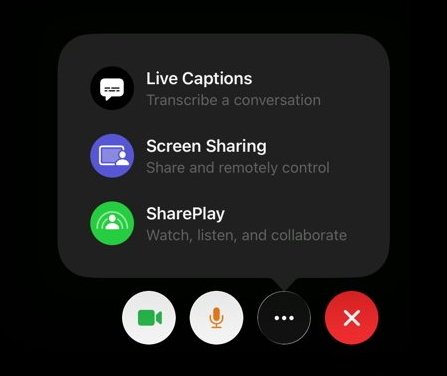

You won’t find the translation feature in the Apple Intelligence settings or the FaceTime menus where you might expect it. Instead, it’s tucked away in Live Captions, an accessibility feature that’s been around for years.

As a result, even people who use accessibility features may not have had reason to notice this new addition. But it’s worth knowing about because it’s a boon in many different situations, and while there are still limitations, Apple has implemented it well.

During a FaceTime call, the system listens to the other person’s speech and converts it to text in your preferred language. Both participants speak normally, and the audio stream is left unchanged.

FaceTime never replaces voices with synthesized speech or embeds translated audio, so the original speaker sound remains intact.

The feature adds a visual layer to calls. You still hear the person with their tone, pace, and emotion intact, and at the same time you can read a real-time translation of what they are saying.

However, to use translated subtitles, Live Captions must be enabled in advance.

- Open the Settings app on your device.

- Tap Accessibility.

- Choose Live Captions.

Once you enable this setting, FaceTime can display subtitles during one-on-one calls. If your device, language and Apple Intelligence requirements are met, you can even get subtitles translated.

Why people expected FaceTime translation long before it arrived

FaceTime feels like the perfect place for live translation because it’s Apple’s most personal communication tool. It is designed for real-time chatting, capturing facial expressions and understanding emotional nuances.

Expectations continued to rise as Apple introduced translation tools into more of its ecosystem. The push started with the Translate app and gradually expanded to Safari websites, Messages conversations, and phone call transcription.

From a user’s perspective, FaceTime seemed like an obvious missing piece. The lack of translation felt less like a technical limitation and more like an unexplained omission, perhaps a marketing one.

Apple never gave a clear reason for the delay, so many assumed that the technology simply wasn’t ready. In fact, it seems that Apple waited until it could add translation without changing the basic experience of using FaceTime.

Why Apple avoided FaceTime translation for so long

Real-time translation during live video calls is much more difficult than for written text because natural conversations are messy and unpredictable. They often have overlapping speech, interruptions, and subtle timing that we instinctively rely on.

Early translation systems used synthesized speech to replace spoken audio, which worked well in controlled settings. However, in real conversations, even a slight delay can lead to people talking over each other or awkward pauses.

Simply replacing someone’s voice can also make conversations feel mechanical and disconnected, which is the opposite of what FaceTime wants to offer. Apple usually avoids adding features that change the basic feel of its products unless they feel natural.

In the case of FaceTime, translated audio would change the experience in a way that Apple seemed unwilling to accept. Instead, the company stuck with FaceTime’s original design to preserve the intended emotional connection, and then added to it.

Why subtitles instead of translated audio

Apple’s solution was to avoid translated audio altogether and instead rely on subtitles. Using subtitles allows the system to tolerate small delays without disrupting the natural rhythm of the conversation.

When audio is out of sync with facial movement, the disconnect is immediately noticeable and uncomfortable. Even a small delay can make a conversation feel awkward in a way that people instinctively respond to.

Text works differently, as subtitles can appear moments later without disrupting the flow or emotional continuity. Readers naturally accept this delay without feeling that something is wrong.

Subtitles also allow Apple to keep the entire translation pipeline on the device. Speech recognition, language modeling and translation all run locally instead of relying on cloud processing.

Translated audio would require buffering, voice synthesis, and tight timing between the two participants. These extra steps add complexity and create more chances for something to go wrong.

The use of subtitles fits neatly into the wider philosophy of Apple Intelligence. The goal remains focused on improving understanding while keeping the human presence at the center of the interaction.

How Apple Intelligence handles FaceTime translation

FaceTime translation relies on the same Apple Intelligence pipeline used across iOS, iPadOS, and macOS. On-device speech recognition converts spoken language to text in real time.

The system then sends this text through a conversational translation model, which prioritizes intent and readability over strict word-for-word accuracy.

Translated subtitles appear on screen and move dynamically so they don’t cover faces or interface elements. Missing language models are automatically downloaded before translation starts.

The audio stream is untouched throughout the call, so tone, pace and emotional nuance remain intact from start to finish.
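The flow described above (recognize speech, translate the text, show a subtitle, leave the audio alone) can be sketched in a few lines of Python. This is only an illustration of the general shape, not Apple’s implementation: `caption_pipeline`, `toy_recognize`, `toy_translate`, and the `PHRASES` table are all hypothetical stand-ins invented for this example, and the real on-device models are not publicly exposed in this form.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Iterator


@dataclass
class Caption:
    original: str    # text recognized from the speaker's audio
    translated: str  # the same text rendered in the viewer's language


def caption_pipeline(
    audio_chunks: Iterable[bytes],
    recognize: Callable[[bytes], str],
    translate: Callable[[str], str],
) -> Iterator[Caption]:
    """Yield one caption per chunk of speech.

    The audio chunks are only read, never modified or replaced,
    which mirrors the article's point that the original voice stays intact.
    """
    for chunk in audio_chunks:
        text = recognize(chunk)
        if not text:
            continue  # silence or unrecognized audio produces no subtitle
        yield Caption(original=text, translated=translate(text))


# Hypothetical stand-ins for the on-device models (assumptions for the demo).
PHRASES = {"hola": "hello", "gracias": "thank you"}


def toy_recognize(chunk: bytes) -> str:
    # Pretend the "audio" is already UTF-8 text.
    return chunk.decode("utf-8").strip()


def toy_translate(text: str) -> str:
    # Fall back to the original text when no translation is known.
    return PHRASES.get(text.lower(), text)


for caption in caption_pipeline([b"hola", b"", b"gracias"], toy_recognize, toy_translate):
    print(f"{caption.original} -> {caption.translated}")
```

Because each caption is produced independently of the audio, a subtitle that arrives a moment late does not delay or alter what the listener hears.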

When translated subtitles are actually useful

FaceTime Translation works best when both people already want to use FaceTime and just need help understanding each other’s words. It is not intended to replace a shared language from the ground up.

Short or mid-length calls are where the feature tends to shine, especially when tone matters more than precise phrasing. Catching up with family overseas fits naturally into this pattern. Checking in with an international colleague also works well.

Quick travel-related calls fall into the same category. At these times, subtitles reduce friction without changing the feel of the call.

Longer meetings, lecture-style calls, or situations that require constant visual attention do not play to the feature’s strengths.

Accuracy limits and what Apple optimizes for

Translated subtitles prioritize meaning over literal accuracy, with Apple’s models focusing on conveying intent over precise phrasing. Prioritizing intent works well for casual conversation where clarity is more important than accuracy.

Idioms, slang, regional dialects and quick back-and-forth exchanges can still cause problems. Subtitles may lag slightly or simplify phrasing to keep the conversation moving.

The system helps with general understanding, but does not belong in legal, medical or other high-stakes conversations.

Hardware requirements and why Apple draws hard lines

Translated subtitles require hardware that supports Apple Intelligence, and unsupported devices never display this option in FaceTime. This feature will remain hidden even if these devices are running the same operating system versions.

On iPhone, support begins with iPhone 15 Pro and iPhone 15 Pro Max. Standard iPhone 15 models and older phones are omitted due to performance limits. iPad support includes iPad Pro models with M1 chips or later.

iPad Air models with M-series processors also qualify. iPad mini models using the A17 Pro also make the cut. However, older iPads built on earlier A-series chips don’t have enough processing headroom.

Mac support is limited to Apple Silicon systems only. Intel-based Macs cannot use Apple Intelligence features, including Live Translation, without serious compromises in performance or battery life.
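The device rules above boil down to a check on the chip. The sketch below is a rough illustration of that rule as this article describes it, not Apple’s actual gating logic. The function name `supports_translated_subtitles` is hypothetical, and treating A-series chips newer than the A17 Pro as supported is an assumption drawn from the statement that iPhone support "begins with" iPhone 15 Pro.

```python
import re


def supports_translated_subtitles(chip: str) -> bool:
    """Illustrative check based on the article's hardware list.

    Not Apple's real eligibility logic; chip names are matched as plain strings.
    """
    chip = chip.strip()

    # Any M-series chip qualifies (iPad Pro, iPad Air, Apple Silicon Macs).
    if re.fullmatch(r"M\d+( (Pro|Max|Ultra))?", chip):
        return True

    # A17 Pro powers iPhone 15 Pro, iPhone 15 Pro Max, and the qualifying iPad mini.
    if chip == "A17 Pro":
        return True

    # Assumption: A-series chips numbered 18 or higher also qualify.
    match = re.fullmatch(r"A(\d+)( Pro)?", chip)
    if match and int(match.group(1)) >= 18:
        return True

    # Older A-series chips (for example "A16 Bionic") and Intel processors are excluded.
    return False


for name in ["A17 Pro", "A16 Bionic", "M1", "Intel Core i7"]:
    print(name, supports_translated_subtitles(name))
```

A lookup like this also shows why the option stays hidden on unsupported devices even on the same operating system version: the gate is the hardware, not the software.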

Why this experience works better on iPad and Mac

Screen size affects how comfortable subtitles are to read, especially during long calls where you need to keep looking at the screen. On an iPhone, subtitles share space with the video and controls while you’re also holding the phone, which can make long chats exhausting.

It’s like juggling too many things at once, and your eyes get tired. A bigger screen can make a big difference in how easy it is to follow along.

On iPads and Macs, subtitles have more visual space so they remain legible without obscuring faces. Longer conversations are much more comfortable as a result.

What Apple still hasn’t solved

Despite its strengths, FaceTime Translation remains limited in several important ways. Group calls are not supported and the list of available languages is still narrow.

Both participants must also speak supported languages for subtitles to appear. Saving or exporting translated transcripts is currently not possible.

The lack of transcripts reduces the feature’s usefulness for follow-up and reference, especially for accessibility users. Apple’s approach reflects caution rather than neglect, leaving open how quickly these gaps will be closed as expectations continue to rise.