Summary created by Smart Answers AI

In summary:

- Macworld reports that a new Apple research paper introduces Principled Coarse-Graining (PCG), a method to speed up Siri speech token generation while maintaining quality.

- The technique groups acoustically similar tokens together to avoid the unnecessary processing rigor that slows down current systems.

- This breakthrough could lead to a significantly faster and more responsive Siri, addressing user complaints about the assistant’s slow performance.

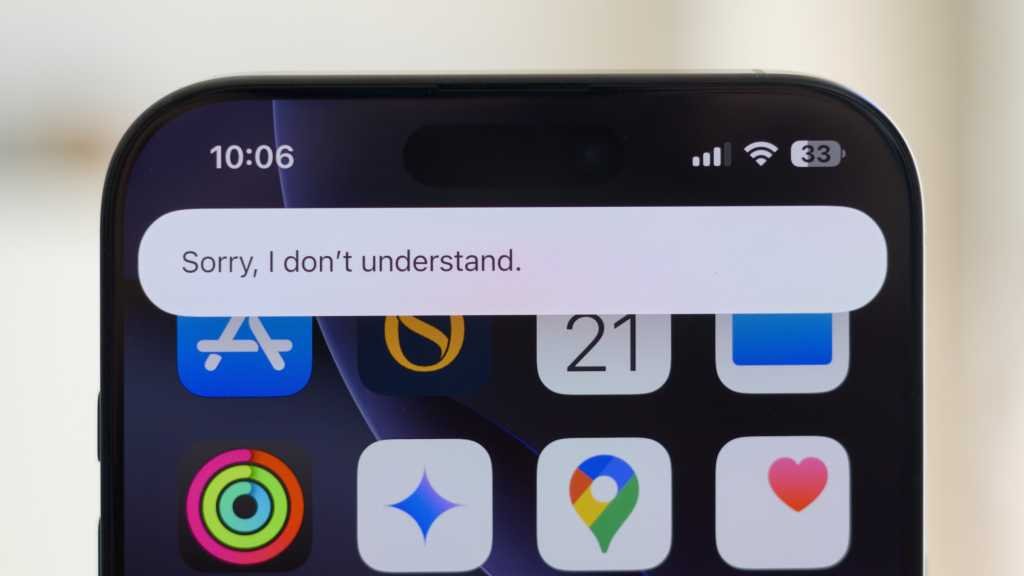

Hopes for a more accurate and functional Siri voice assistant are currently leaning heavily on a short-term fix: Apple’s recently announced partnership with Google to use Gemini technology to improve its own AI offerings. In the long run, however, a new research paper offers a method that would allow Apple to speed up Siri all on its own.

The paper, “Principled Coarse-Grained Acceptance for Speculative Decoding in Speech,” was written by five researchers working for Apple and Tel Aviv University and published late last month (via 9to5Mac). It proposes a new approach that could, in the researchers’ words, “accelerate the generation of speech tokens while preserving speech quality.”

The key to speed, the researchers say, is to avoid unnecessary rigor. “For speech LLMs that generate acoustic tokens,” they write, “exact token matching is too restrictive: many individual tokens are acoustically or semantically interchangeable, reducing acceptance rates and limiting speedup.” In other words, at a certain level of similarity it does not matter which of the two possible speech tokens is chosen because they sound or mean essentially the same thing, and it is a waste of time and processing resources to insist on figuring out which one is correct.

The proposed solution is to group acoustically similar tokens together.

“We propose Principled Coarse-Graining (PCG), a framework that replaces exact token matching with group-level verification,” the paper explains. “We create Acoustic Similarity Groups (ASGs) in the token embedding space of the target model, capturing its internal organization of semantic and acoustic similarity. PCG performs speculative sampling on a coarse-grained distribution over the ASG and performs rejection sampling at the group level.”
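To make the idea concrete, here is a minimal Python sketch of what accepting a draft token at the group level could look like. It is an illustration under simplifying assumptions, not the researchers' implementation: the groups are treated as disjoint and supplied by hand, the function names (`coarse_grain`, `group_accept_prob`, `token_accept_prob`, `verify`) are hypothetical, and the probabilities are made up. The paper builds its groups from the target model's embedding space instead.

```python
import random

def coarse_grain(probs, group_of, num_groups):
    """Sum token probabilities inside each group (assumed disjoint here)."""
    mass = [0.0] * num_groups
    for token, p in enumerate(probs):
        mass[group_of[token]] += p
    return mass

def token_accept_prob(draft_token, p_target, q_draft):
    """Standard speculative decoding: compare exact token probabilities."""
    return min(1.0, p_target[draft_token] / q_draft[draft_token])

def group_accept_prob(draft_token, p_target, q_draft, group_of, num_groups):
    """Group-level check: compare the mass of the draft token's group."""
    P = coarse_grain(p_target, group_of, num_groups)
    Q = coarse_grain(q_draft, group_of, num_groups)
    g = group_of[draft_token]
    return min(1.0, P[g] / Q[g])

def verify(draft_token, p_target, q_draft, group_of, num_groups, rng=random):
    """Keep the draft token if its group is accepted, otherwise resample."""
    accept = group_accept_prob(draft_token, p_target, q_draft, group_of, num_groups)
    if rng.random() < accept:
        return draft_token
    # Rejected: pick a group from the residual max(P - Q, 0), then pick a
    # token inside that group according to the target model.
    P = coarse_grain(p_target, group_of, num_groups)
    Q = coarse_grain(q_draft, group_of, num_groups)
    residual = [max(p - q, 0.0) for p, q in zip(P, Q)]
    g = rng.choices(range(num_groups), weights=residual)[0]
    tokens = [t for t in range(len(p_target)) if group_of[t] == g]
    return rng.choices(tokens, weights=[p_target[t] for t in tokens])[0]

# Toy example: tokens 0 and 1 sound alike (group 0), as do tokens 2 and 3.
group_of = [0, 0, 1, 1]
p_target = [0.1, 0.5, 0.3, 0.1]   # large, slow model
q_draft = [0.5, 0.1, 0.1, 0.3]    # small, fast model

print(token_accept_prob(0, p_target, q_draft))
print(group_accept_prob(0, p_target, q_draft, group_of, 2))
```

In this toy case the two models disagree about which token within a group is most likely but agree on the groups themselves, so exact matching would accept draft token 0 only about 20 percent of the time, while the group-level check accepts it every time.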

The researchers say this will increase speed without significantly reducing reliability. In experiments (see page 4 of the paper), raising the number of tokens generated per second reduces accuracy slightly, but far less than it does with standard speculative decoding.

The paper is rather technical, but not too long. Check out the PDF to read the whole thing.