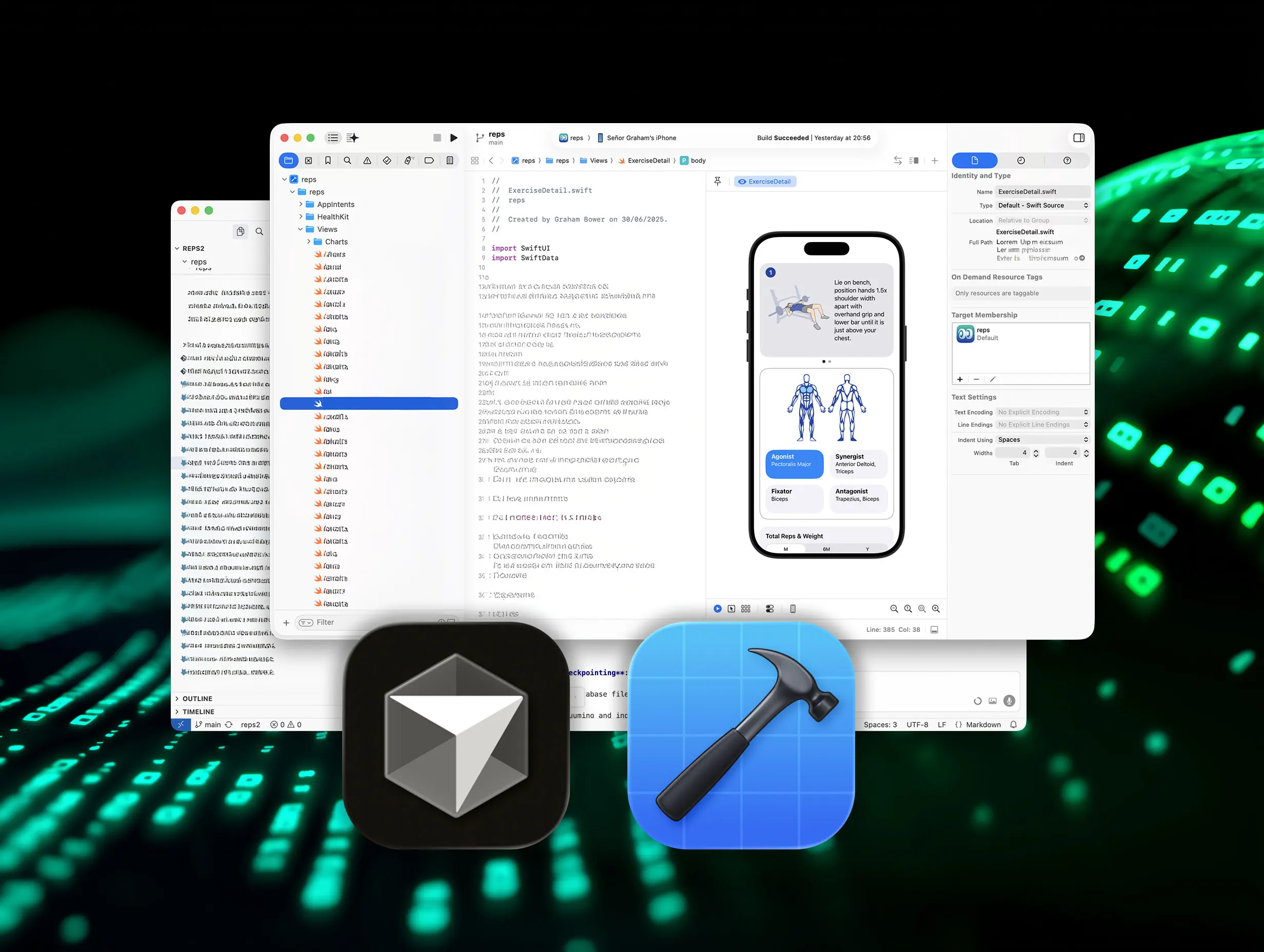

A year ago, I had no idea how to write an iPhone app. Now I’ve shipped a full-fledged strength training app, built using AI coding tools, or “vibe coding” as it’s become known.

Many people misunderstand vibe coding. They think it’s just for prototyping and tinkering. It isn’t. Used correctly, it is a skill that can be learned and mastered. And with modern AI tools like Cursor and the new Coding Assistant in Xcode, it’s now more accessible than ever.

So, if you’re curious about vibe coding and want to try it out, here are ten lessons I learned the hard way.

Mastering the art of vibe coding

Vibe coding is rapidly changing the way software is produced. It allows you to build apps without manually writing the code yourself. Instead, an AI assistant (sometimes called an “agent”) will generate the code for you. In practice, this means describing what you want in a ChatGPT-like chat interface built into coding tools like Xcode or Cursor.

It may seem silly to say that it’s a skill you can learn. After all, don’t AI assistants do the hard work?

Well, yes and no. Modern models can generate entire applications. But how you write the prompts, evaluate the results, and manage the project is critical.

So what skills did I actually bring to this?

I’m a graphic designer, not a developer. I can write HTML and CSS, but I had never built a native app before. I have two decades of experience designing apps and websites and working closely with developers. I know how to brief technical people and I’ve learned how engineers think.

Fourteen years ago, my partner and I designed an older version of Reps & Sets. So I had a deep understanding of the product. I even reused the original data model as a starting point. But I didn’t know Swift and I had never shipped an app myself.

At the time I started this project, Xcode’s AI capabilities were limited, so I used Cursor as my AI coding environment along with models like Claude and GPT. I still relied on Xcode for asset management, debugging, testing, provisioning and submitting to the App Store.

Top 10 tips for successful vibe coding

Tip 1: Do your homework

You don’t need programming knowledge to use the AI coding assistant. But you have to understand what it does for you.

Before I started, I spent several hours in Apple’s free Swift Playgrounds tutorials. I also dove into WWDC sessions to understand how iOS apps are structured and how Apple’s user interface components fit together.

Think of it like watching football. You don’t have to play the game, but if you don’t know the rules, you won’t understand what’s going on on the field.

Tip 2: Think big and small

Applications are complex. Trying to describe one in a single prompt would take thousands of words and only confuse your AI assistant. Long prompts dilute the model’s attention, and important details get skipped. Think about your project in big chunks, but brief your AI assistant on the small ones.

As you break down the elements, you will find that there is a logical order to their assembly. In my case, I had to create the exercise card before I could create exercise logging, because logging depends on the exercises already being there. And even the exercise card wasn’t a single task. It had to be broken down further: list all exercises, show illustrations, filter by muscle group and so on.

Tip 3: Ask questions

You don’t always have to tell your AI assistant what to do. Sometimes it’s better to ask.

If it changes a file you didn’t expect, ask for an explanation. Sometimes there is a good reason. Sometimes there isn’t. Either way, you’ll learn something. And if you’re not sure how to implement a feature, ask about options before committing to one. The assistant often suggests approaches you might not have considered.

In Xcode, click the lightning bolt icon in the Coding Assistant window to disable “Apply code changes automatically”. This allows you to explore ideas and ask questions without the assistant touching your code until you’re ready. In Cursor, set the agent to Ask mode.

Tip 4: Use clean language

Most of the time we don’t just describe the problem, we frame it. And within that framing are hidden assumptions about what’s going on.

AI models are extremely sensitive to language. They don’t just read our words. They infer intent from how we structure them. If the prompt subtly assumes what the problem is, the model will often follow that path, even if it’s wrong. For example, you might describe a bug in a way that inadvertently narrows the search space. The AI then optimizes within that framing rather than challenging it.

Clean language is a technique I learned years ago on a coaching course. It originates from psychotherapy and counseling, but is increasingly used in business and education. The idea is simple: describe what you observe without inserting an interpretation. Give the other side, human or machine, space to think.

It turns out that what works in psychotherapy works surprisingly well in vibe coding.

Tip 5: Provide context

Don’t just tell your AI assistant what to build. Tell it why.

When people build software, they make dozens of small judgments: naming functions, structuring data, choosing default values. These decisions only make sense if they understand the purpose of the feature. The same goes for AI.

When you explain why something matters, the AI assistant can make better decisions on its own. And sometimes it surprises you. For example, when I added the ability to specify the incline of a gym bench, Claude set the range from -20° to 90°. I didn’t specify that. When I asked why, it explained that this was the standard range for an adjustable bench.

Because I described the real-world context, it filled in the gaps correctly. Context doesn’t just improve code. It unlocks the model’s wider knowledge.
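To make the bench example concrete, here is a minimal Swift sketch of how such a range might be expressed in a model type. The type name `BenchIncline`, the `validRange` property, and the clamping behavior are all assumptions for illustration. Only the -20° to 90° range comes from the story above, and this is not the code from Reps & Sets.

```swift
// Hypothetical model type; names and behavior are illustrative only.
struct BenchIncline {
    /// The range the assistant proposed for an adjustable bench, in degrees.
    static let validRange: ClosedRange<Double> = -20...90

    let degrees: Double

    /// Clamps the input into the valid range (an assumed design choice),
    /// so an out-of-range value can never be stored.
    init(degrees: Double) {
        self.degrees = min(max(degrees, Self.validRange.lowerBound),
                           Self.validRange.upperBound)
    }
}

let decline = BenchIncline(degrees: -35)  // below the range, clamped to -20
let upright = BenchIncline(degrees: 90)   // already inside the range
print(decline.degrees, upright.degrees)
```

The point is less the clamping itself than where the numbers came from: a default like this is exactly the kind of small judgment an assistant can only get right when it knows what the feature is for.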

Tip 6: Provide background material

AI models are trained on past data. Apple’s frameworks, especially SwiftUI, are evolving rapidly. This creates a gap. There just isn’t as much high-quality, up-to-date Swift training data available for LLMs as there is for older languages like Python or JavaScript. As John Gruber recently pointed out, Swift 6 clearly highlights this problem.

When I was adding Liquid Glass to my app, Claude insisted that iOS 26 didn’t exist. The fix was not to argue with it, but to provide better context. Once I asked Claude to search the web for the relevant documentation, it implemented the feature without any problems.

When working with new APIs, include links to relevant WWDC sessions in the prompt and ask the assistant to base its solution on the transcript. For example, when I implemented workout mirroring between iPhone and Apple Watch, including the exact WWDC video dramatically improved the results.

AI can only work with what it knows or what you give it.

Tip 7: Consult different models and escalate

If you get stuck, don’t assume the model is correct. Get a second opinion.

Different AI models have different strengths, training data, and reasoning styles. If one is struggling with a bug, another may spot the problem immediately. It’s like bringing in a fresh pair of eyes. For most tasks I found Claude worked best, but when I had problems I turned to GPT.

It’s also worth remembering that coding assistants and chat assistants don’t always behave the same, even though they’re powered by similar models. Sometimes I talked a problem through in ChatGPT and then shared that reasoning with Claude. The combination often worked better than either alone.

And when all else fails, escalate. More advanced models are slower and more expensive, but can offer deeper reasoning when dealing with complex architecture or subtle bugs.

Tip 8: Use README files

When you build something complex, eventually you hit a wall. You rewrite the prompt. You switch models. Nothing helps.

In my case, the app’s UI gradually got slower and slower until it was almost unusable. The main cause was some rookie architectural mistakes, the kind that work well in a prototype but fall apart in a real application. I finally diagnosed and fixed the problem. But as development continued, the same patterns kept coming back.

That’s when I changed my strategy. Instead of just fixing the bug, I asked the assistant to write a README file documenting the architectural rules of the project. What went wrong, why it went wrong, and how to avoid it in the future.

These README files are not for me. They are for the AI. They act as guardrails: use background actors for CloudKit writes, don’t block the main thread, properly filter large queries, and so on. Every time a new feature is created, the assistant returns to these rules. In vibe coding, your README becomes part of the architecture.
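As a rough illustration of the first two rules, here is a minimal Swift sketch of a background actor that owns all writes. Everything in it is hypothetical: the `WorkoutSet` and `WorkoutStore` types, the method names, and the short sleep that stands in for a slow network save. A real app would call CloudKit where the sleep is. This is not the architecture of Reps & Sets.

```swift
// Hypothetical types for illustration; a real app would wrap CloudKit here.
struct WorkoutSet: Sendable {
    let exercise: String
    let reps: Int
    let weightKg: Double
}

/// An actor serializes access to its own state and, unless marked @MainActor,
/// does its work off the main thread, so slow writes don't freeze the UI.
actor WorkoutStore {
    private var pending: [WorkoutSet] = []
    private(set) var savedCount = 0

    /// Queues a set for saving. Cheap, so callers are not held up.
    func enqueue(_ workoutSet: WorkoutSet) {
        pending.append(workoutSet)
    }

    /// Writes everything queued so far as one batch.
    func flush() async throws {
        let batch = pending
        pending.removeAll()
        // Stand-in for a slow network write (assumption, not a CloudKit call).
        try await Task.sleep(nanoseconds: 50_000_000)
        savedCount += batch.count
    }
}

/// Example call site, such as the body of a Task started from a button action.
func logExampleSet(using store: WorkoutStore) async throws -> Int {
    await store.enqueue(WorkoutSet(exercise: "Bench press", reps: 8, weightKg: 60))
    try await store.flush()
    return await store.savedCount
}
```

A README rule such as “all writes go through the store actor” gives the assistant something concrete to check each new feature against.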

Tip 9: Review every line of vibe-coded code before committing

I don’t understand every line of code my AI assistants generate. But I read it all before I commit.

There are two reasons. First, it is the fastest way to learn. Second, you need to be sure that the assistant did only what you intended. Nothing more, nothing less.

Some files are particularly sensitive. For example, your data model. A small change there can have serious consequences. In my case, this might mean deploying schema updates in CloudKit. It’s not something you want to do by accident.

When I see a change that I didn’t expect, I always stop and ask why it happened. More than once this conversation revealed a misunderstanding that could have turned into a costly mistake.

AI writes code. You are still responsible for it.

Tip 10: Treat your AI assistant like a colleague

At this point you may notice a pattern! The easiest way to write better prompts is to stop treating the AI like a code-dispensing machine. Treat it like a colleague.

When I work with developers, I don’t just hand over instructions and wait for them to be implemented. We share a goal. We talk about constraints. We challenge each other’s ideas. This is how better solutions are created.

The same way of thinking works surprisingly well with AI. Explain why the feature is important. Share context. Explore the options. Weigh the alternatives. The more you work together, the better the results.

Can you really “motivate” a machine? Maybe not. But AI models are trained on human conversation. When you communicate clearly and thoughtfully, they respond in kind. Vibe coding works best when you collaborate, not command.

Now it’s your turn

A year ago I couldn’t write a Swift app. By the time I submitted Reps & Sets, I understood SwiftUI, CloudKit, and Apple Watch integration much better than I ever expected. I learned by building, with the AI as my collaborator.

Today, Reps & Sets is live on the App Store for iPhone, iPad and Apple Watch. So if you’re wondering what vibe coding can do in the real world, download it and see for yourself.

And if you’ve been sitting on an app idea, this could be your moment.