It took a ridiculously short amount of time to create a simple iPhone app using agentic coding

The improved AI agent support in Xcode has made vibe coding amazingly simple for beginners, to the point where some apps can be built without writing any code by hand. Here’s what we found.

The concept of vibe coding is that the user describes to a chatbot or AI agent what to code, and the agent codes it for them. It started out as an extension of the code suggestion and completion systems you would normally find in a development environment.

Over time, and with the increased sophistication of these artificial intelligence tools, it has become more and more involved. A person can describe to an agent the general concept of what they want to produce, and the agent will sometimes come up with features or even complete applications.

The results usually don’t behave as intended on the first prompt, so there’s usually a period of time where the user either edits the code or asks the AI to fix it for them.

As a concept, it’s not a bad one, because it can help people quickly produce code with features that work well enough to be acceptable to the end user.

It is also something that has evolved in many ways. Services like OpenAI’s ChatGPT and Anthropic’s Claude have tools to help write code, including chat windows that work in Xcode and other environments.

This is a concept that Apple really wants people to embrace in their work.

Vibing with Xcode

On February 3, Apple updated Xcode to version 26.3, initially in a build available to developers, but with a wider release expected shortly thereafter.

The update increased support for agentic coding, allowing OpenAI’s Codex and Anthropic’s Claude to fully integrate with Xcode. Apple did this so that developers can install their preferred agent and quickly iterate on projects with it.

This included one-click installations for Claude and Codex, as well as additional functionality for other Model Context Protocol-compatible agents.

Apple has included some new built-in tools specifically with the models in mind. For example, it is possible for the agent to take a screenshot of what the app looks like in the device simulator in Xcode, which can then be used to determine what the agent should change based on the current request.

Models can also search documentation, examine file structures, update project settings, and iterate through builds and fixes.

It sounds like an agent can do a lot for you. It can, and to a rather amazing extent.

Painless installation

I’m already quite familiar with using ChatGPT for coding in Xcode, albeit in a more limited way. Through my game development efforts documented on AppleInsider, I used ChatGPT to edit individual pages of code in Xcode, one at a time.

Other than getting the Unity export working on the iPad, I have never built an entire Xcode project from scratch. It’s also safe to say that while I have some experience with C#, Unity’s scripting language, I’ve never built a native Swift app.

For the purposes of this test, consider me a person who has no idea how coding works where Swift is involved, and who is building their first iPhone app.

Installing AI agents in Xcode is simple enough for most users.

After installing the Xcode 26.3 release candidate through the Apple Developer Program, I was notified of the new coding agent changes before I could create a new project. Once I went through the initial steps, like coming up with a name, I was given a blank project to work from.

At this point I went to the Xcode Settings section and selected Intelligence. This is where the Coding Intelligence section lived, complete with options that allowed agents to use built-in Internet access tools and, more dangerously, to allow agents to use the Bash command line without first asking.

This second option is best avoided by inexperienced developers. I immediately imagined allowing the agent to run a command that would completely wipe out my Mac’s storage for no good reason.

That said, it would be unlike Apple not to include some level of guardrails for what the AI can do. While Apple exposes Xcode functionality to agents, it has presumably made sure that it would be extremely difficult, if not impossible, for things to go badly wrong.

I chose OpenAI because I have a paid subscription there, and was immediately warned that Codex is third-party software and will have access to a lot of things if I’m not careful. I quickly agreed, partly because I had accepted similar terms in my previous use of ChatGPT.

Once it was installed, I went back into the project and clicked on the star icon in the top left corner to bring up the agent column.

All of this was extremely simple to set up, suspiciously so. Short of Xcode outright prompting users to install an agent right after launch, it’s hard to imagine a much simpler or more painless installation process.

Mission Agent

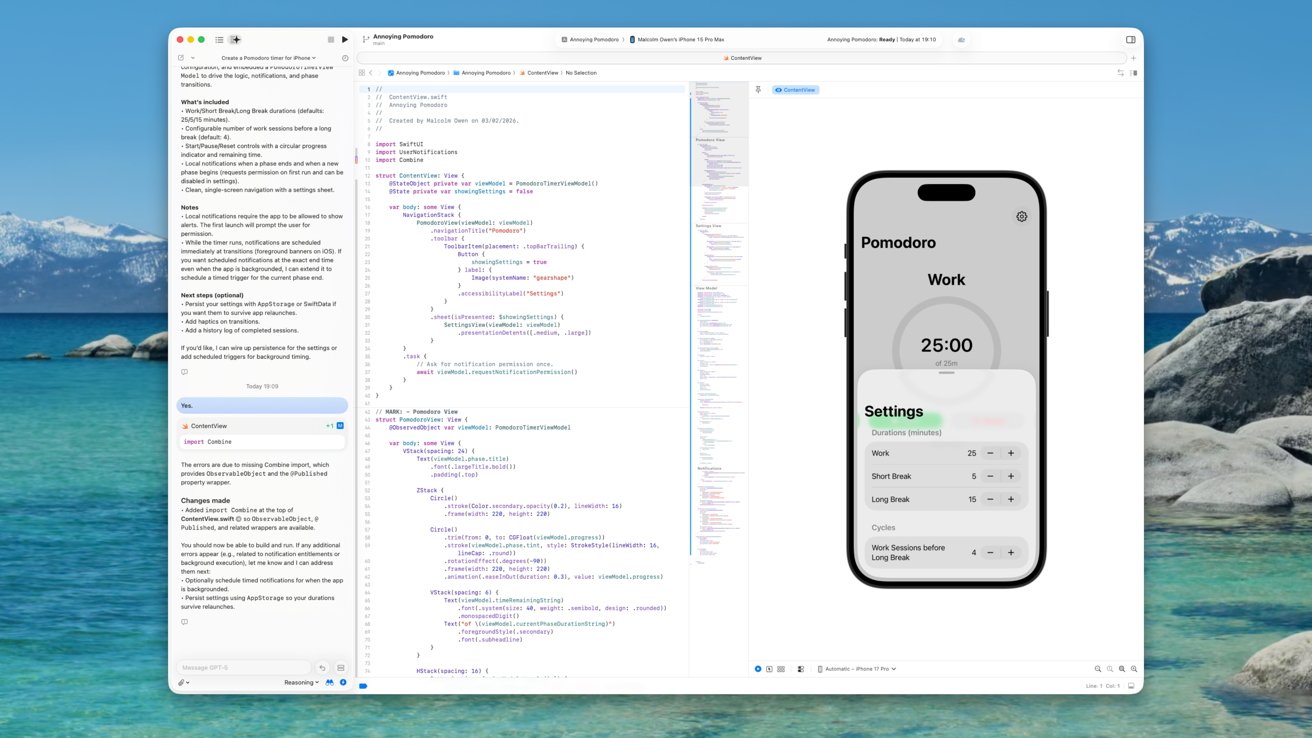

For this test and my first-ever Swift project in Xcode, I wanted a very simple app that could take a beginner a few hours to build. An immediate thought was a Pomodoro timer.

With the agent window open, I provided a relatively simple project prompt and expected follow-up questions.

Hi. I want to make a simple Pomodoro timer for my iPhone. It should provide options to set different time blocks according to Pomodoro principles and then run. An alert should play when it’s time to stop working and start again.

That was a fairly sparse prompt, but it was enough to quickly fill Xcode with code. The agent asked twice whether I wanted to apply its changes, which I agreed to each time, and I quickly saw an iPhone simulator with a working Pomodoro timer.

A brief description turned an empty project into a fully functional application in a matter of minutes.

It had a basic interface, sure, but it also had a settings button. When this was opened, it revealed options to change the duration of work and breaks, the number of work sessions, and even a switch to enable and disable alerts.
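To give a sense of what sits behind a settings screen like that, here is a minimal Swift sketch of the timer logic. It is not the code Codex generated. The type names (`PomodoroSettings`, `Phase`, `PomodoroTimer`), the default durations, and the long break after a set number of work sessions are all assumptions for illustration, based on the usual Pomodoro conventions.

```swift
// Hypothetical settings mirroring the options described above.
// The default values are assumptions, not taken from the generated app.
struct PomodoroSettings {
    var workMinutes = 25
    var shortBreakMinutes = 5
    var longBreakMinutes = 15
    var sessionsBeforeLongBreak = 4
    var alertsEnabled = true
}

// The phase the timer is currently in.
enum Phase: Equatable {
    case work(session: Int)
    case shortBreak
    case longBreak
}

struct PomodoroTimer {
    let settings: PomodoroSettings
    private(set) var phase: Phase = .work(session: 1)
    private(set) var secondsRemaining: Int
    private var completedSessions = 0

    init(settings: PomodoroSettings = PomodoroSettings()) {
        self.settings = settings
        self.secondsRemaining = settings.workMinutes * 60
    }

    // Advances the countdown by one second.
    // Returns true when a phase has just ended and alerts are enabled,
    // which is the moment the app would play its alert.
    mutating func tick() -> Bool {
        secondsRemaining -= 1
        guard secondsRemaining <= 0 else { return false }
        advancePhase()
        return settings.alertsEnabled
    }

    // Moves from work to a break, or from a break back to work.
    private mutating func advancePhase() {
        // Guard against a zero or negative session count in the settings.
        let cycle = max(1, settings.sessionsBeforeLongBreak)
        switch phase {
        case .work:
            completedSessions += 1
            if completedSessions % cycle == 0 {
                phase = .longBreak
                secondsRemaining = settings.longBreakMinutes * 60
            } else {
                phase = .shortBreak
                secondsRemaining = settings.shortBreakMinutes * 60
            }
        case .shortBreak, .longBreak:
            phase = .work(session: completedSessions % cycle + 1)
            secondsRemaining = settings.workMinutes * 60
        }
    }
}

// Usage: run a one-minute work block and report when the phase changes.
var timer = PomodoroTimer(settings: PomodoroSettings(workMinutes: 1))
for _ in 0..<60 {
    if timer.tick() {
        print("Phase ended, now in: \(timer.phase)")
    }
}
```

In a real app, something like a SwiftUI view would call `tick()` once per second and play a sound or post a notification whenever it returns true. Keeping the countdown in a plain struct like this makes it easy to test without the simulator.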

Even more surprising, it took less than two minutes from sending the prompt to seeing a working app on my Mac screen.

After a few button presses, the app appeared on my iPhone’s display, as I had previously registered it as a developer device. It worked perfectly too.

To push the technology further, I asked how I would set the app up for TestFlight. The agent created a list of instructions for how to achieve this, as well as suggestions for other changes that could be made to the app, such as notification screens.

Good vibes

This was a simple test of the changes made to Xcode, but one that demonstrates the power of agentic coding.

Amazingly, it created a functional, albeit relatively simple, app in just a few minutes. If you know what you want to build and can describe it in text form, it will spit out a version of the app that you can use as a base.

Further changes can also be made through prompts if you don’t have a lot of coding experience. But for those who know what they’re doing, it’s a quick way to get to the stage where there’s a basic application that you can then build on.

Coding beginners, and frankly, those who don’t want to know how to code at all, also have the opportunity to build their app ideas quickly and reasonably well.

Developers who have experience producing apps and likely know how to best employ AI agents in their workflow should also see this as a positive.

However, this significantly lowers the barrier to entry for app production. This is both a blessing and a curse.

On the negative side, it’s not hard to consider the possibility that people will build a lot of simple apps quickly, without due care and attention. All followed by a shove into the App Store to make some quick cash.

In the coming weeks and months, a flood of simple and very similar apps will likely hit the App Store’s reviewers. While this could fill the App Store with quickly built apps, it could be a worthwhile price to pay for allowing more capable apps to get new and improved features faster.

You may have to wade through a lot more junk apps in the App Store in the future. But agentic coding could make the gems among them easier to spot and better than ever.